Many teams adopted Scrum as part of becoming “agile”.

This usually starts with bringing in consultants and hiring Scrum Masters.

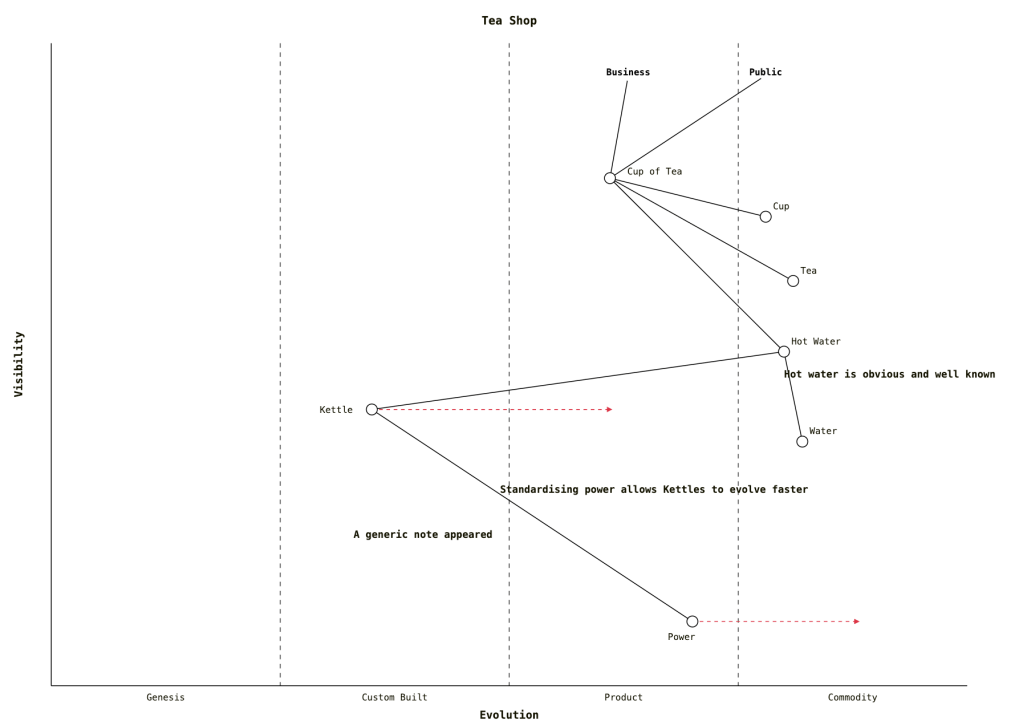

The starting point will look like this:

You have 2-week sprints.

These will include the following meetings:

- Daily standups (each workday)

- Sprint Planning (every 2 weeks)

- Refinement Meetings (every 1-2 weeks)

- Retrospective (every 2 weeks)

- Sprint Demo (every 2 weeks)

Teams attempt to complete every task that they agreed to within the 2-week window.

Does this seem familiar?

Is it working for you?

How often do things roll over to the next sprint?

How often do you need to change what you are doing within a sprint?

How frequently do you run out of time in the Refinement Meetings?

How much time is tied up in these agile ceremonies?
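One way to answer that last question is to add it up. The sketch below is only an illustration: the meeting lengths in `MEETING_HOURS` are hypothetical placeholders, not figures from any Scrum guide, and the function names are made up for this example. Swap in your own team's numbers.

```python
# Hypothetical meeting lengths in hours. These are assumptions for
# illustration only; replace them with what your team actually spends.
MEETING_HOURS = {
    "daily_standup": 0.25,
    "sprint_planning": 2.0,
    "refinement": 1.0,
    "retrospective": 1.0,
    "sprint_demo": 1.0,
}


def ceremony_hours_per_sprint(workdays=10, refinements=1, hours=MEETING_HOURS):
    """Hours one person spends in ceremonies during a single sprint."""
    return (
        workdays * hours["daily_standup"]      # one standup per workday
        + hours["sprint_planning"]             # once per sprint
        + refinements * hours["refinement"]    # one or more per sprint
        + hours["retrospective"]               # once per sprint
        + hours["sprint_demo"]                 # once per sprint
    )


def ceremony_share(workdays=10, hours_per_day=8.0, refinements=1):
    """Fraction of one person's working time that goes to ceremonies."""
    total = ceremony_hours_per_sprint(workdays, refinements)
    return total / (workdays * hours_per_day)


if __name__ == "__main__":
    print(f"{ceremony_hours_per_sprint():.1f} hours per person per sprint")
    print(f"{ceremony_share():.1%} of working time")
```

Multiply the per-person figure by the team size to see what the ceremonies cost the whole team each sprint.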

The Scrum technique came out of the Extreme Programming practices. In order to be teachable in 2 days, it dropped the technical practices (pair programming, continuous integration, TDD, simple design …)

The basic cycle is sensible. It is the Observe, Orient, Decide, Act (OODA) loop.

It needs to be applied at several levels.

The basic Scrum settings are only the default. To be effective you need to adapt them. You have probably never been told that you can change the process.

If you frequently have to change what is in the sprint, start by reducing the sprint length to 1 week.

Retros can still happen every other week. The other meetings become shorter, as you are now handling a smaller list of things. You will be deploying functional units more frequently and getting better feedback. Disruptive business requests can normally be satisfied with “can it wait until next week?” If not, then you have a true emergency that you would have had to stop for anyway.

Once you have a 1-week sprint, it is not a big step to move to Kanban. In Kanban you focus on the next most important thing and work on getting it finished. This means that refinements become even simpler, as you have one thing to work on. You can even have a task that is the analysis that will generate the backlog. Kanban is all about eliminating waste. Do what you need to finish the top task, then move to the next.
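As a rough sketch of that flow, the snippet below pulls one item at a time from the top of the board and finishes it before taking the next. The `Task` class, its `spawns` field, and `work_board` are hypothetical names invented for this illustration; they do not come from any Kanban tool. An analysis task is modelled as a task that adds new items to the backlog when it finishes.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Task:
    """A card on the board (hypothetical model for illustration)."""
    name: str
    # Follow-up work discovered while doing this task, e.g. by an analysis task.
    spawns: list = field(default_factory=list)


def work_board(backlog):
    """Work the board one item at a time, in priority order.

    Returns the task names in the order they were finished.
    """
    todo = deque(backlog)
    finished = []
    while todo:
        task = todo.popleft()        # pull only the top item
        finished.append(task.name)   # finish it before pulling anything else
        todo.extend(task.spawns)     # finished analysis may generate new backlog
    return finished


if __name__ == "__main__":
    analysis = Task(
        "analyse checkout",
        spawns=[Task("add payment form"), Task("send receipt email")],
    )
    for name in work_board([Task("fix login bug"), analysis]):
        print(name)
```

In this sketch new items simply join the end of the queue. In practice you would re-prioritise the backlog whenever it changes, so the most important thing is always on top.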

Kanban needs to be performed at a sustainable pace.

There are technical challenges: how do you break everything down into small, valuable increments?

The fast feedback is the payoff: you can get to the point of multiple small releases per day.